Introduction

SolidFire System Architecture

SolidFire storage combines hardware and software designed to automate and manage an entire SolidFire storage system.

NetApp SolidFire Architecture

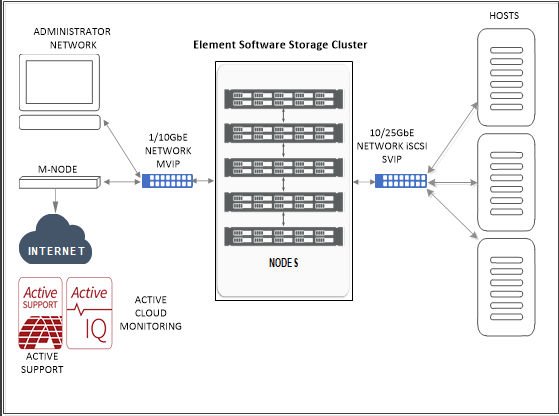

The following diagram shows the basic layout of the SolidFire Storage System and how it connects to a network:

Cluster, Nodes, and Drives

Cluster

A cluster is the hub of a SolidFire storage system and is made up of a collection of nodes. A cluster requires at least four nodes (five or more are recommended) for SolidFire storage efficiencies to be realized. A cluster appears on the network as a single logical group and can then be accessed as block storage.

Creating a new cluster initializes a node as the communication owner and establishes network communication across all nodes within the cluster. This process is performed once during the initial cluster setup.

You can scale the cluster by adding nodes, up to a maximum of 100. Adding nodes does not interrupt service, and the cluster automatically uses the performance and capacity of each new node.
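The node-count limits described above can be expressed as a simple check. This is a minimal sketch that uses only the numbers stated in this section (minimum of four, five or more recommended, up to 100); the function name and the returned labels are hypothetical and not part of any SolidFire tool.

```python
# Node-count limits taken from the text above.
MIN_NODES = 4          # minimum needed for storage efficiencies
RECOMMENDED_NODES = 5  # "five or more are recommended"
MAX_NODES = 100        # upper bound on cluster size


def check_cluster_size(node_count: int) -> str:
    """Classify a planned cluster size (labels are illustrative only)."""
    if node_count < MIN_NODES:
        return "too_small"
    if node_count > MAX_NODES:
        return "too_large"
    if node_count < RECOMMENDED_NODES:
        return "minimum"
    return "recommended"
```

A planning script could call `check_cluster_size` before adding nodes to confirm that the resulting cluster stays within the documented range.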

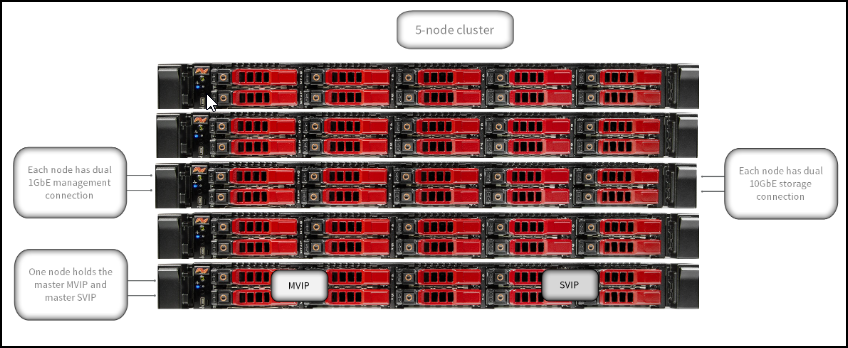

The following diagram illustrates the basic Ip Address layout for the cluster

Administrators and hosts can access the cluster using virtual IP addresses. Any node in the cluster can host the virtual IP addresses. The Management Virtual IP (MVIP) enables cluster management through a 1GbE connection, while the Storage Virtual IP (SVIP) enables host access to storage through a 10GbE connection.

These virtual IP addresses enable consistent connections regardless of the size or makeup of a SolidFire cluster. If a node hosting a virtual IP address fails, another node in the cluster begins hosting the virtual IP address.
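Because the MVIP stays reachable regardless of which node currently hosts it, a management client only needs to know the MVIP. The sketch below builds (but does not send) an HTTPS request addressed to the MVIP. It assumes the Element API is exposed as JSON-RPC at a path of the form `/json-rpc/<version>` and that a `GetClusterInfo` method is available; confirm the endpoint path, version string, and method name against the API reference for your release. The helper name `build_element_request` is hypothetical, and authentication is deliberately omitted.

```python
import json
import urllib.request


def build_element_request(mvip, method, params=None,
                          api_version="12.3", request_id=1):
    """Build a POST request for the cluster's management virtual IP.

    The URL layout and payload shape are assumptions for illustration.
    """
    url = f"https://{mvip}/json-rpc/{api_version}"
    payload = {"method": method, "params": params or {}, "id": request_id}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Example: target the MVIP (a documentation-range address is used here).
request = build_element_request("192.0.2.10", "GetClusterInfo")
```

The request always targets the virtual address, never an individual node, so the client needs no changes if the MVIP moves to another node after a failure.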

Nodes

Nodes are individual hardware components that are grouped into a cluster to be accessed as block storage. A SolidFire storage system has two types of nodes: storage nodes and Fibre Channel nodes.

Storage Nodes

A SolidFire storage node is a server containing a collection of drives that communicate with each other through the Bond10G network interface. Drives in the node contain block and metadata space for data storage and data management.

Storage nodes have the following characteristics:

- Each node has a unique name. If a node name is not specified by an administrator, it defaults to SF-XXXX, where XXXX is four random characters generated by the system.

- Each node has its own high-performance non-volatile random access memory (NVRAM) write cache to improve overall system performance and reduce write latency.

- Each node is connected to two networks, storage and management, each with two independent links for redundancy and performance. Each node requires an IP address on each network.

- You can create a cluster with new storage nodes, or add storage nodes to an existing cluster to increase storage capacity and performance.

- You can add or remove nodes from the cluster at any time without interrupting service.

Drives

A storage node contains one or more physical drives that are used to store a portion of the data for the cluster. The cluster utilizes the capacity and performance of the drive after the drive has been successfully added to a cluster.

A storage node contains two types of drives:

Volume metadata drives: Store compressed information that defines each volume, clone, or snapshot within a cluster. The total metadata drive capacity in the system determines the maximum amount of storage that can be provisioned as volumes. The maximum amount of storage that can be provisioned is independent from how much data is actually stored on the block drives of the cluster. Volume metadata drives store data redundantly across a cluster using Double Helix data protection.

Note

Some system event log and error messages refer to volume metadata drives as slice drives.

Block drives: Store the compressed, de-duplicated data blocks for server application volumes. Block drives make up a majority of the storage capacity of the system. The majority of read requests for data already stored on the SolidFire cluster, as well as requests to write data, occur on the block drives. The total block drive capacity in the system determines the maximum amount of data that can be stored, taking into account the effects of compression, thin provisioning, and de-duplication.
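Since the two drive types bound different limits (metadata capacity bounds provisioning, block capacity bounds stored data), it can help to total raw capacity per drive type. The following is a minimal sketch: the `Drive` class, the role labels `volume_metadata` and `block`, and the `slice` alias mapping are hypothetical names chosen for illustration, not values returned by the system.

```python
from collections import defaultdict
from dataclasses import dataclass

# Some log messages call volume metadata drives "slice" drives,
# so both labels are folded into one role here.
ROLE_ALIASES = {"slice": "volume_metadata"}


@dataclass(frozen=True)
class Drive:
    role: str            # "volume_metadata", "slice", or "block"
    capacity_bytes: int  # raw capacity of the drive


def capacity_by_role(drives):
    """Sum raw drive capacity per role, normalizing the 'slice' alias."""
    totals = defaultdict(int)
    for drive in drives:
        role = ROLE_ALIASES.get(drive.role, drive.role)
        totals[role] += drive.capacity_bytes
    return dict(totals)
```

The totals are raw capacity only; the sketch does not model compression, de-duplication, or thin provisioning.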

Fibre Channel (FC) Nodes

SolidFire Fibre Channel nodes provide connectivity to a Fibre Channel switch, which you can connect to Fibre Channel clients. Fibre Channel nodes act as a protocol converter between the Fibre Channel and iSCSI protocols; this enables you to add Fibre Channel connectivity to any new or existing SolidFire cluster.

Fibre Channel nodes have the following characteristics:

- Fibre Channel switches manage the state of the fabric, providing optimized interconnections.

- The traffic between two ports flows through the switches only; it is not transmitted to any other port.

- Failure of a port is isolated and does not affect operation of other ports.

- Multiple pairs of ports can communicate simultaneously in a fabric.

SolidFire Element OS Features

The SolidFire Element Operating System (OS) comes preinstalled on each node. SolidFire Element OS includes the following features:

- SolidFire Helix™ self-healing data protection

- Always on, inline, real-time deduplication

- Always on, inline, real-time compression

- Always on, inline, reservation-less thin provisioning

- Fibre Channel node integration

- Management Node LDAP capability for secure login functionality

- Guaranteed volume level Quality of Service (QoS): Minimum IOPS, Maximum IOPS, IOPS burst control

- Instant, reservation-less deduplicated cloning

- Volume snapshots:

  - Snapshots of individual volumes

  - Scheduling snapshots of a volume or group of volumes

  - Consistent snapshots of a group of volumes

  - Cloning multiple volumes individually or from a group snapshot

- Integrated Backup and Restore for volumes

- Real-Time Replication for clusters and volumes

- Native multi-tenant (VLAN) management and reporting:

  - Virtual Routing and Forwarding (VRF)

  - Tagged Networks

- Proactive Remote Monitoring through Active IQ

- Complete REST-based API management

- Granular management access/role-based access control

- Virtual Volumes (VVols) support for VMware® vSphere®

- Volume and system level performance and data usage reporting

- VASA support

- VMware vSphere® (VAAI) support
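The QoS feature in the list above names three per-volume settings: minimum IOPS, maximum IOPS, and burst IOPS. The sketch below shows one way to sanity-check such a triple before applying it. It assumes the ordering minimum ≤ maximum ≤ burst and uses the key names `minIOPS`, `maxIOPS`, and `burstIOPS`; treat both the ordering rule and the key names as assumptions to confirm against the API reference. The function name `make_qos` is hypothetical.

```python
def make_qos(min_iops: int, max_iops: int, burst_iops: int) -> dict:
    """Return a QoS settings dict after basic consistency checks."""
    if min_iops <= 0:
        raise ValueError("min_iops must be positive")
    if not (min_iops <= max_iops <= burst_iops):
        raise ValueError("expected min_iops <= max_iops <= burst_iops")
    return {
        "minIOPS": min_iops,      # guaranteed floor
        "maxIOPS": max_iops,      # sustained ceiling
        "burstIOPS": burst_iops,  # short-term ceiling
    }
```

Rejecting inconsistent values locally avoids sending a request that the cluster would be expected to refuse.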

Use the following references to get started:

To explore monitored metrics, see Supported Metrics and Default Monitoring Configuration.

To verify prerequisites and configure, see Working with NetApp SolidFire.

Key Use Cases

Discovery Use Cases

- Provides resource visibility so that the administrator can view and manage the available resources (for example, Cluster, Nodes, Drives, Volume Accounts, and Volumes) under different resource types.

- Publishes relationships between resources to provide a topological view.

- For detailed structure, see the Resource Hierarchy section.

Monitoring Use Cases

- Publishes 39 metrics across categories such as Availability, Capacity, Performance, and Usage.

- Generates concern alerts for each metric to notify the administrator of issues with a resource.

Supported Target Versions

Target device version: 12.3.X

REST API version: 12.3.X

Resource Hierarchy

Cluster → Nodes → Drives → Volume Accounts → Volumes
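The hierarchy above is a single chain, so it can be modeled as an ordered list. This is a minimal sketch for illustration; the helper names `parent_of` and `path_to` are hypothetical and are not part of the integration.

```python
# Resource types in the order given by the hierarchy above.
HIERARCHY = ["Cluster", "Nodes", "Drives", "Volume Accounts", "Volumes"]


def parent_of(resource_type: str):
    """Return the type directly above resource_type, or None at the top."""
    index = HIERARCHY.index(resource_type)  # ValueError for unknown types
    return HIERARCHY[index - 1] if index > 0 else None


def path_to(resource_type: str):
    """Return the chain of types from the top down to resource_type."""
    return HIERARCHY[: HIERARCHY.index(resource_type) + 1]
```

For example, `path_to("Drives")` walks the chain from `Cluster` through `Nodes` to `Drives`.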

Version History

| Application Version | Bug fixes / Enhancements |
|---|---|
| 2.0.2 | Latest release generated from SDK workbook metadata. |
| 1.0.0 | Initial discovery and monitoring support. |